The paper “I Know Why You Went to the Clinic: Risks and Realization of HTTPS Traffic Analysis” revisits the vulnerability of HTTPS encryption to traffic analysis.

The authors note that most secure web connections directly access a specific website requested by the user (as opposed to the setting where a user is observed using Tor, where the site is not known). This brings its own challenges: different websites are usually quite distinct, while many sub-pages of the same website may share a common layout that makes them hard to distinguish through encryption. Further complications come from caching, cookies, and the use of Flash. However, this setting may be easier than the Tor case, in that the adversary could realistically enumerate all pages on a website.

The paper chose 10 sites, including health, finance, video rental, and civil liberties. Traces are collected from encrypted requests and replies, and some simple pre-processing is done. In particular, the traffic volumes are collated in bursts and only their sizes are stored. This is done to add robustness to jitter in the traffic. Traces are then clustered using k-means and Gaussians are used to model each of the clusters. The use of the Gaussian distribution provides some further resilience to noise in the size of resources.
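To make the burst pre-processing concrete, here is a minimal sketch in Python. It is my own illustration rather than the paper's code: the packet representation (signed sizes, positive for client-to-server) and the name `collate_bursts` are assumptions, as is the definition of a burst as a maximal run of packets in one direction.

```python
from typing import List, Tuple


def collate_bursts(packets: List[int]) -> List[Tuple[str, int]]:
    """Collapse a packet trace into (direction, total_bytes) bursts.

    Assumption: each packet is a signed size, positive for client-to-server
    and negative for server-to-client. A burst is taken to be a maximal run
    of packets travelling in the same direction; timing is discarded, which
    is what gives robustness to jitter.
    """
    bursts: List[Tuple[str, int]] = []
    for size in packets:
        if size == 0:
            continue  # ignore empty packets
        direction = "up" if size > 0 else "down"
        if bursts and bursts[-1][0] == direction:
            # Same direction as the previous packet: extend the current burst.
            bursts[-1] = (direction, bursts[-1][1] + abs(size))
        else:
            # Direction changed: start a new burst.
            bursts.append((direction, abs(size)))
    return bursts


# Hypothetical trace: a request, a three-packet reply, then another request.
trace = [300, 200, -1500, -1500, -400, 120]
print(collate_bursts(trace))
```

Only the sequence of burst sizes survives this step; those sizes are the values that would then be clustered and modelled with Gaussians.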

A machine learning approach is then followed to select which features distinguish pages: a logistic regression model is used to learn weights that best separate pages. A Hidden Markov Model is then used to track full traces of user navigation through an encrypted website.

To evaluate the approach, traces were collected using virtual machines. This allows the full state of a VM to be frozen, so that the machine state can be reset perfectly between trace collections. Traces were collected in sessions where user state was meant to be preserved; otherwise a fresh VM could be spun up to clear the state. In this way traces were collected under different conditions of cache (on/off), cookies, and browsing of different sites. Interestingly, turning the cache on systematically reduced the accuracy of the attack by 10%-20%.

Finally, the paper presents a defense against traffic analysis. It is based on padding the bursts of traffic to some threshold in order to confuse the feature extraction stage. Since many thresholds are possible, the maximum is chosen, subject to a size “inflation” budget. Other defenses were also tried and compared, and there is a substantial reduction in the accuracy of this attack.
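The trade-off between padding threshold and inflation budget can be sketched as below. This is a simplified illustration under my own assumptions, not the defense as specified in the paper: bursts are padded up to the next multiple of a step size, and the largest candidate step whose total overhead stays within the budget is chosen. All function names are hypothetical.

```python
from typing import List, Optional


def pad_to_multiple(size: int, step: int) -> int:
    """Round a burst size up to the next multiple of `step` (integer arithmetic)."""
    return ((size + step - 1) // step) * step


def inflation(bursts: List[int], step: int) -> float:
    """Fractional overhead added by padding every burst to a multiple of `step`."""
    original = sum(bursts)
    padded = sum(pad_to_multiple(b, step) for b in bursts)
    return padded / original - 1.0


def choose_step(bursts: List[int], candidate_steps: List[int], budget: float) -> Optional[int]:
    """Pick the largest candidate step whose inflation stays within `budget`.

    Returns None when no candidate fits the budget.
    """
    feasible = [s for s in candidate_steps if inflation(bursts, s) <= budget]
    return max(feasible) if feasible else None


# Hypothetical burst sizes (bytes) and a 20% inflation budget.
bursts = [500, 3400, 120]
step = choose_step(bursts, [256, 512, 1024], budget=0.20)
print(step, [pad_to_multiple(b, step) for b in bursts])
```

A larger step makes more bursts collide on the same padded size, which is what confuses the feature extraction, but it costs more bandwidth; the budget caps that cost.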

The paper “A Predictive Differentially-Private Mechanism for Mobility Traces” looks at sanitizing mobility traces within the paradigm of differential privacy.

The idea is that a user wishes to use a location-based service but also to maintain some privacy, so they distort the reported location somewhat to hide where exactly they are. Of course, there is a clear trade-off between utility and the privacy that can be maintained. In this work, utility is measured by how accurate the reported location is compared with the real one.

At the heart of the system lies a definition of privacy based on “geo-indistinguishability”. The insight is that locations that are close by need to be indistinguishable, while locations very far apart may be distinguished. This leaks more information than traditional differential privacy, but stands a chance of offering some utility for a single user trace.

A previously proposed mechanism offers such privacy by first perturbing the center point of the user location (using a 2D generalization of the Laplacian distribution) and then requesting information about a larger area. Its privacy degrades in a predictable manner when multiple observations are seen by the adversary. However, the authors note that the points in real-world traces are highly correlated with each other. For example, a user stays in a cafe for a while, or follows a certain path on a motorway. This insight may be used to reduce the noise introduced by the mechanism.
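For intuition, sampling from such a 2D Laplacian takes only a few lines of Python. This is a minimal sketch, assuming planar coordinates in metres and a privacy parameter `epsilon` expressed per metre; the function names are mine, not the paper's. It relies on the fact that, in polar coordinates, the angle is uniform and the radius follows a Gamma distribution with shape 2 and scale 1/epsilon.

```python
import math
import random
from typing import Tuple


def planar_laplace_noise(epsilon: float, rng: random.Random) -> Tuple[float, float]:
    """Draw a 2D noise vector with density proportional to exp(-epsilon * distance)."""
    theta = rng.uniform(0.0, 2.0 * math.pi)      # direction: uniform on the circle
    radius = rng.gammavariate(2.0, 1.0 / epsilon)  # distance: Gamma(shape=2, scale=1/epsilon)
    return radius * math.cos(theta), radius * math.sin(theta)


def perturb(x: float, y: float, epsilon: float, rng: random.Random) -> Tuple[float, float]:
    """Report a noisy version of the true planar location (x, y)."""
    dx, dy = planar_laplace_noise(epsilon, rng)
    return x + dx, y + dy


rng = random.Random(42)
# With epsilon = 0.01 per metre the expected displacement is 2 / epsilon = 200 m.
print(perturb(0.0, 0.0, epsilon=0.01, rng=rng))
```

Each independent report spends its own share of the privacy budget, which is why a long, highly correlated trace is expensive to protect point by point.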

First a strawman mechanism is proposed: a prediction function is defined that tries to predict the next position to report from the previously published positions. If the prediction yields a “good enough” location, subject to some threshold, the prediction is reported. If not, a fresh noisy location is released, at a cost to the privacy budget. However, this simple scheme breaks the definition of geo-indistinguishability.

The strawman mechanism can be strengthened by doing a private test for the accuracy of the prediction, which in itself consumes some amount of the privacy budget. As a result, no information leaks from the prediction test on the data, which yields a geo-indistinguishable mechanism.

To make the mechanism easier to integrate into location-based services, a privacy budget manager is also devised. The manager is provided with a certain target privacy level and utility, and will configure the parameters of the mechanism to offer good utility subject to the constraints.

The evaluation was done on the GeoLife traces from MSR, which were processed to simulate queries to an LBS through sub-sampling.
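The overall shape of the strengthened mechanism can be sketched as follows. This is a simplified illustration under my own assumptions and makes no claim to reproduce the paper's exact construction or its privacy proof: the prediction is simply the last reported location, the private test compares the prediction error against a threshold perturbed with one-dimensional Laplace noise, and the budget is accounted for as a plain sum. All names and parameter values are hypothetical.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]


def laplace_1d(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) noise, as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_location(p: Point, epsilon: float, rng: random.Random) -> Point:
    """Planar Laplace perturbation: uniform angle, Gamma(2, 1/epsilon) radius."""
    theta = rng.uniform(0.0, 2.0 * math.pi)
    radius = rng.gammavariate(2.0, 1.0 / epsilon)
    return p[0] + radius * math.cos(theta), p[1] + radius * math.sin(theta)


def predictive_release(trace: List[Point], eps_test: float, eps_noise: float,
                       threshold: float, rng: random.Random) -> Tuple[List[Point], float]:
    """Return the reported locations and the total budget spent."""
    reported: List[Point] = []
    spent = 0.0
    for true_loc in trace:
        if reported:
            prediction = reported[-1]  # assumed predictor: repeat the last report
            spent += eps_test          # the private test always costs eps_test
            error = math.hypot(true_loc[0] - prediction[0], true_loc[1] - prediction[1])
            if error <= threshold + laplace_1d(1.0 / eps_test, rng):
                reported.append(prediction)  # prediction is good enough: reuse it
                continue
        spent += eps_noise  # prediction failed (or first point): pay for fresh noise
        reported.append(noisy_location(true_loc, eps_noise, rng))
    return reported, spent


# Hypothetical user sitting still for 50 queries (coordinates in metres).
trace = [(0.0, 0.0)] * 50
reports, spent = predictive_release(trace, eps_test=0.01, eps_noise=0.05,
                                    threshold=2000.0, rng=random.Random(7))
print(len(set(reports)), spent, "vs independent noise:", 50 * 0.05)
```

For a stationary or slowly moving user almost every query passes the test, so the budget is dominated by the cheap tests rather than by fresh noise.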

Interestingly, a plug-in for Firefox and Chrome is available that implements the approach.

The paper “Quantifying the Effect of Co-location Information on Location Privacy”, presented by Alexandra-Mihaela Olteanu, discusses the important problem of how co-location information affects location privacy in mobile networks.

The work notes that co-location information is widespread: for example, users tag a number of people in pictures on social networks; face recognition is getting better; and devices record the devices around them. Interestingly, the information an adversary may infer from this co-location information is not hidden by traditional location privacy mechanisms. In fact this is an instance of privacy technologies not doing a very good job at hiding information leaking from interactions between users. When correlations between the activities of users are observable by an adversary, the adversary may combine the information from both to increase the accuracy of their inferences. In fact the actions of one user may have serious consequences for the privacy of another (a co-target).

This work attempts to quantify the degree of privacy lost from such combined inferences. It uses the location privacy model by Shokri et al. (S&P). Users' locations and traces are modeled using a Markov model and the adversary observes co-locations. Tracking a single user is the traditional inference problem in a hidden Markov model. With co-locations the attack is more complex, since the constraints implied by co-locations need to be honored. This increases the information available to the adversary, but also increases the computational complexity of the attack. As a result the authors also propose a heuristic attack, which ignores some of the information and constraints but tries to keep a certain set of observations consistent.

The evaluation was performed on the GeoLife (MSR Asia) dataset. Interestingly, co-location information can be inferred from such raw traces, to simulate a number of scenarios. For example, one may vary the fraction of co-location events observed by the adversary or the location privacy mechanism used. The results show, unsurprisingly, that an adversary observing co-locations has a significant impact on privacy. They also show that observing a target with low privacy settings may lead to the compromise of a co-target with high privacy settings.

This is a very nice work. As someone in the audience pointed out, some of the computational complexity may be tackled by using sampling-based estimates of the posterior distribution, which is otherwise intractable. This is probably a worthy space for follow-up research. Relaxing the Markov mobility assumption (which makes the posterior computations even more complex) is another avenue for future work.
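The single-user baseline mentioned above is standard hidden Markov model filtering, which can be sketched in plain Python. This is a generic textbook forward filter with toy numbers of my own choosing, not the paper's attack; in particular it does not model co-locations, which would couple the chains of several users and make the state space much larger.

```python
from typing import List


def forward_filter(prior: List[float],
                   transition: List[List[float]],
                   emission: List[List[float]],
                   observations: List[int]) -> List[List[float]]:
    """Return P(location at time t | observations up to t) for each t.

    transition[i][j] is the probability of moving from location i to j;
    emission[j][o] is the probability of observing o when truly at j
    (this is where a location privacy mechanism would be modelled).
    """
    n = len(prior)
    belief = list(prior)
    posteriors: List[List[float]] = []
    for t, obs in enumerate(observations):
        if t > 0:
            # Prediction step: push the belief through the mobility model.
            belief = [sum(belief[i] * transition[i][j] for i in range(n)) for j in range(n)]
        # Update step: weight by the likelihood of the observation, then normalise.
        belief = [belief[j] * emission[j][obs] for j in range(n)]
        total = sum(belief)
        belief = [b / total for b in belief]
        posteriors.append(belief)
    return posteriors


# Toy example with two locations and a noisy observation of the true one.
prior = [0.5, 0.5]
transition = [[0.9, 0.1], [0.1, 0.9]]
emission = [[0.8, 0.2], [0.2, 0.8]]
for belief in forward_filter(prior, transition, emission, [0, 0]):
    print([round(b, 4) for b in belief])
```

The cost per step here is quadratic in the number of locations for one user; honoring co-location constraints means reasoning about joint states of several users, which is where the complexity discussed above comes from.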

(The first session of PETS2014 deals with privacy for mobile devices.)

The paper “Exploiting Delay Patterns for User IPs Identification in Cellular Networks” observes that a significant number of mobile operators (over 50% of the market in North America and over 30% in Europe) assign public and routable IP addresses to mobile devices. That opens the devices to denial of service attacks, resource depletion, etc. But how can an adversary find the IP address of a specific user? If the adversary can observe a large fraction of the network this may be easy. The paper shows that even a small entity — like a single user — may track a specific user’s IP.

The tracking attack uses specificities of how mobile devices use the cell network. It turns out that, to conserve battery, the network stack may be in different states: “Idle”, “cell dispatch” or “cell fetch”. Most of the time a device is in “Idle”. The authors notice that the push notifications of IM services allow for indirectly injecting delay patterns on a target device. To receive the notification the device goes to a high energy reception / transmission state, and for a time window (of 10-15 sec) the latency to reach the device drops. An adversary may use this insight to identify the IP of a device associated with an IM identifier.

So the attack methodology goes as follows: the attacker sends an IM message, lowering the latency of the target device. They then scan a range of devices by IP to detect whether each device's latency is compatible with the hypothesis that it has received a push notification. If not, the IP may be excluded. The attack is repeated a number of times to gain increasing certainty about the exact address of the target. Graphs suggest that after about 10-20 iterations the space of possible device IPs becomes significantly smaller. For best results, however, the repeated probes should be spread quite a few minutes apart. There is still some confusion between the target device and devices that always transmit; eliminating those further improves the accuracy of the attack.

Simple countermeasures involve either firewalling devices to make it difficult to reach them, or using IPv6, which makes the space of possible addresses to scan infeasibly large.
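The elimination loop at the core of this methodology can be sketched as below. This is only an illustration of the set-narrowing logic: the latency values, the 200 ms threshold, and the addresses (taken from a documentation range) are all made up, and a real attack would of course need to send the IM message and measure latencies itself.

```python
from typing import Dict, Iterable, List, Set


def eliminate(candidates: Set[str], latencies_ms: Dict[str, float],
              threshold_ms: float) -> Set[str]:
    """Keep only IPs whose probe latency is low enough to be compatible with
    the device having just been woken up by a push notification.

    IPs that did not answer the probe are treated as having infinite latency.
    """
    return {ip for ip in candidates
            if latencies_ms.get(ip, float("inf")) <= threshold_ms}


def run_attack(candidates: Iterable[str], rounds: List[Dict[str, float]],
               threshold_ms: float) -> Set[str]:
    """Apply one elimination step per round (one IM message plus one scan)."""
    remaining = set(candidates)
    for latencies_ms in rounds:
        remaining = eliminate(remaining, latencies_ms, threshold_ms)
    return remaining


# Hypothetical measurements: .1 is the target, .4 is a device that always transmits.
ips = ["198.51.100.1", "198.51.100.2", "198.51.100.3", "198.51.100.4"]
rounds = [
    {"198.51.100.1": 80, "198.51.100.2": 900, "198.51.100.3": 70, "198.51.100.4": 100},
    {"198.51.100.1": 90, "198.51.100.2": 85, "198.51.100.3": 950, "198.51.100.4": 95},
]
print(sorted(run_attack(ips, rounds, threshold_ms=200)))
```

In this toy run the always-transmitting device survives every round alongside the target, which is the confusion described above; probing without sending an IM message first would be one way to identify and remove such devices.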

Increasingly, traffic analysis makes use of complex statistical techniques, which are not (yet) part of the standard training of computer scientists at Cambridge (UK). As a result I spend a lot of time reading textbooks like the excellent Bayesian Data Analysis (by Andrew Gelman et al., who also maintains a blog) or Information Theory, Inference, and Learning Algorithms by David MacKay, who treats the subject more from a Computer Science perspective. The most fascinating aspect of Bayesian statistics is that it is very easy (and even intrinsic to the analysis) to calculate one’s confidence in an inferred assertion.

One question that has been on my mind for some time is whether any Cambridge Colleges are better or worse on average than any others in Computer Science. Being ‘better’ is a very subjective judgement when it comes to education, but the primary issue that I wanted to study is whether one could claim that students of any particular college get consistently better or worse exam marks than others. Luckily the class marks for Part IB and Part II computer science were recently published (on a physical notice board, sorry no link) for the 2008 exams, and these can be analysed to answer that question. But what would be the right way of doing this?

A simple-minded approach would involve taking the proportion of students that got high marks (a First or Two-one) and ranking colleges accordingly. Any college that is in the bottom half of this ranking is underperforming, while any college in the top half is doing well. Yet this approach gives no intuition about how significant the results are: different colleges have different numbers of students, and these numbers are in any case very small. It is likely that the resulting ranking is subject to random fluctuations.

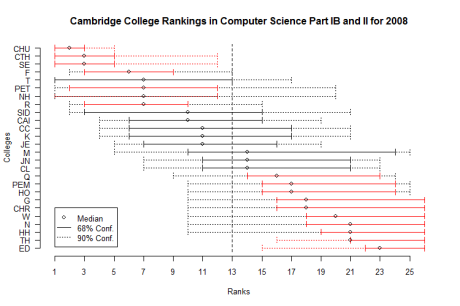

Instead I construct a simple Bayesian model for the ranking of colleges, which readily lends itself to calculating the confidence an observer can have about the assigned ranks. The resulting graph plots the rank of each college, along with the 68% (solid line) and 90% (dotted line) confidence intervals over that rank. Red intervals indicate that the college is significantly above or below average.

Only a handful of colleges turn out to be significantly (i.e. more than 90% of the time) better or worse in terms of exam results. For the record, Churchill, St Catharine’s, Selwyn, and Fitzwilliam are definitely above average. For all others the small number of students in a single year makes it impossible to tell if the colleges are systematically or randomly getting high or low marks. There is an interesting correlation between these results and physical proximity to the Computer Laboratory!

A few more details on the model used to derive the above graph: the model assumes that each college i has a (hidden) variable pi in [0,1] representing the probability that a student at this college receives a First or Two-one (observed) mark. Each student independently receives such a mark with probability pi, so a binomial distribution describes the number of high marks that each college receives, given its number of students. Colleges are ranked according to their respective probabilities pi, from highest to lowest.

The problem is then transformed into a simple Bayesian inference exercise: given the observed marks we need to estimate the hidden pi, for each college. We model our belief about each pi as a Beta distribution, with a non-informative prior Beta(a=1,b=1). We update the distribution according to the observations, and it becomes for each college pi ~ Beta(a=1+#(High Marks), b=1+#(Low Marks)). We then draw samples from those distributions and rank the colleges. We repeat this procedure about 55,000 times (that should be large enough) and calculate the intervals in which the college ranks lie 68% and 90% of the time. A Python script generates the samples and an R script produces the plot.
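The sampling procedure is short enough to sketch here. This is a minimal re-implementation for illustration, not the original script, and the college names and mark counts below are made-up toy values rather than the published results; the function name and the way quantiles are read off the sorted ranks are my own choices.

```python
import random
from typing import Dict, List, Tuple


def _quantile(sorted_ranks: List[int], q: float) -> int:
    """Read the q-quantile off an already sorted list of sampled ranks."""
    idx = min(len(sorted_ranks) - 1, int(q * len(sorted_ranks)))
    return sorted_ranks[idx]


def rank_intervals(marks: Dict[str, Tuple[int, int]], n_samples: int = 5000,
                   seed: int = 0) -> Dict[str, Dict[int, Tuple[int, int]]]:
    """For each college return the 68% and 90% intervals over its rank.

    `marks` maps a college name to (number of high marks, number of low marks).
    """
    rng = random.Random(seed)
    names = list(marks)
    rank_samples: Dict[str, List[int]] = {name: [] for name in names}
    for _ in range(n_samples):
        # Posterior of each pi under a Beta(1, 1) prior: Beta(1 + high, 1 + low).
        draws = {name: rng.betavariate(1 + high, 1 + low)
                 for name, (high, low) in marks.items()}
        # Rank colleges by sampled probability, highest first (rank 1 is best).
        ordered = sorted(names, key=lambda name: draws[name], reverse=True)
        for rank, name in enumerate(ordered, start=1):
            rank_samples[name].append(rank)
    intervals: Dict[str, Dict[int, Tuple[int, int]]] = {}
    for name, ranks in rank_samples.items():
        ranks.sort()
        intervals[name] = {
            level: (_quantile(ranks, (1 - level / 100) / 2),
                    _quantile(ranks, (1 + level / 100) / 2))
            for level in (68, 90)
        }
    return intervals


# Toy input: (high marks, low marks) for three hypothetical colleges.
toy = {"College A": (19, 1), "College B": (10, 10), "College C": (1, 19)}
for name, result in rank_intervals(toy).items():
    print(name, result)
```

With counts this lopsided the intervals collapse to a single rank; with a handful of students per college and similar proportions they spread across most of the table, which is exactly what the plot shows for the majority of colleges.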

Disclaimer: there is no causation implied between college and marks. This correlation could be due to colleges selecting students in particular ways, teaching them better, or ensuring that they leave university instead of getting low marks. There is no way of telling which apply from this analysis. It is totally wrong to judge colleges just based on such exam performance statistics and, as the results show, such judgements are statistically meaningless most of the time.